Behind every AI flashcard app is a stack of technologies that would have seemed like science fiction a decade ago. Natural language processing, computer vision, speech recognition, and machine learning algorithms work together to transform raw information into optimized learning experiences. But how exactly does this all fit together? And what separates a truly intelligent flashcard system from one that merely slaps "AI" on its marketing page?

In this deep dive, we will disassemble the technology stack behind modern AI flashcard applications, explore how they generate cards from virtually any source material, and examine the spaced repetition algorithms that determine precisely when you should review each card. Whether you are a student evaluating tools or a developer curious about the underlying systems, this article will give you a clear, technical understanding of how these apps actually work.

The AI Flashcard App Technology Stack

A modern AI flashcard app is not a single technology but rather an orchestration of multiple AI subsystems, each handling a different stage of the learning pipeline. Understanding these components helps explain why some apps produce dramatically better results than others.

The core technology stack typically includes four layers:

- Input Processing Layer: Natural Language Processing (NLP), Optical Character Recognition (OCR), Computer Vision, and Automatic Speech Recognition (ASR) work together to ingest source material in any format -- PDFs, images, audio, video, or plain text.

- Knowledge Extraction Layer: Large language models and semantic analysis engines identify key concepts, relationships, and testable facts from the processed input.

- Card Generation Layer: AI constructs question-answer pairs, cloze deletions, and multi-modal cards that follow evidence-based flashcard design principles.

- Review Optimization Layer: Spaced repetition algorithms -- powered by machine learning models trained on millions of review sessions -- determine the optimal moment to present each card for review.

What makes this stack powerful is the feedback loop between layers. When a user struggles with a card during review, that signal propagates back through the system: the review optimizer adjusts scheduling, the card generator may create supplementary cards targeting the weak concept, and the knowledge extraction layer refines its understanding of what constitutes "difficult" material for that particular learner.
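The data flow between the layers, including the feedback loop, can be sketched in a few lines of Python. This is a minimal illustration, not how any particular app is built: `FlashcardPipeline`, `Card`, `toy_extract`, and the lapse counter are hypothetical names, and the lambdas stand in for the real OCR, NLP, and language models.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Card:
    question: str
    answer: str
    concept: str


@dataclass
class FlashcardPipeline:
    """Hypothetical wiring of the layers; every name here is illustrative."""
    ingest: Callable[[bytes], str]                  # input processing layer
    extract: Callable[[str], List[Dict[str, str]]]  # knowledge extraction layer
    generate: Callable[[Dict[str, str]], Card]      # card generation layer
    lapses: Dict[str, int] = field(default_factory=dict)

    def build_deck(self, raw: bytes) -> List[Card]:
        text = self.ingest(raw)
        return [self.generate(fact) for fact in self.extract(text)]

    def record_review(self, card: Card, recalled: bool) -> None:
        # Feedback loop: failed recalls are counted per concept so that
        # other layers can react (extra cards, adjusted difficulty).
        if not recalled:
            self.lapses[card.concept] = self.lapses.get(card.concept, 0) + 1

    def weak_concepts(self, min_lapses: int = 2) -> List[str]:
        return [c for c, n in self.lapses.items() if n >= min_lapses]


def toy_extract(text: str) -> List[Dict[str, str]]:
    # Toy stand-in for a real extraction model: reads "term: definition" lines.
    facts = []
    for line in text.splitlines():
        if ":" in line:
            term, definition = line.split(":", 1)
            facts.append({"term": term.strip(), "definition": definition.strip()})
    return facts


pipeline = FlashcardPipeline(
    ingest=lambda raw: raw.decode("utf-8"),
    extract=toy_extract,
    generate=lambda f: Card(f"What is {f['term']}?", f["definition"], f["term"]),
)
deck = pipeline.build_deck(b"Osmosis: diffusion of water across a membrane")
```

Keeping each layer behind a plain function makes it easy to swap one model for another without touching the rest of the pipeline.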

How AI Generates Flashcards from Any Source Material

The most visible feature of an AI flashcard app is its ability to transform arbitrary content into study-ready cards. This process varies significantly depending on the input format, and each pathway involves distinct technical challenges.

From PDFs and Documents (NLP + Layout Analysis)

PDFs are the most common input format for students and professionals, but they are also one of the trickiest for AI to handle. Unlike plain text, PDFs encode visual layout rather than semantic structure. A PDF does not inherently know what is a heading, what is body text, and what is a footnote -- it only knows coordinates and fonts.

The processing pipeline for documents typically follows these steps:

- Layout analysis: ML models trained on document structures identify headers, paragraphs, tables, figures, and captions. Modern systems use transformer-based models that can distinguish a chapter title from a sidebar note based on visual context, not just font size.

- Text extraction and chunking: Once structure is identified, the system extracts text in semantic chunks -- keeping related paragraphs together rather than splitting mid-sentence at page breaks.

- Concept identification: NLP models analyze each chunk to identify key terms, definitions, cause-effect relationships, comparisons, and processes. Named entity recognition flags important people, dates, and technical terms.

- Card generation: The system creates cards targeting identified concepts. A definition becomes a "What is X?" card. A cause-effect relationship becomes "What causes X?" and "What results from Y?" A comparison table generates multiple cards contrasting features.

The quality difference between apps often comes down to the third step, concept identification. Basic systems extract sentences verbatim and slap a question mark on them. Advanced systems, like those covered in our comprehensive guide, understand context deeply enough to generate cards that test understanding rather than mere recognition.
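To make the chunking and card generation steps concrete, here is a deliberately simple sketch. It assumes the text has already been extracted from the PDF, and it uses a regular expression where a production system would use an NLP model; `chunk_paragraphs`, `definition_cards`, and the `DEFINITION` pattern are hypothetical names chosen for illustration.

```python
import re
from typing import List, Tuple


def chunk_paragraphs(text: str, max_chars: int = 400) -> List[str]:
    """Group whole paragraphs into chunks; never split inside a paragraph."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks: List[str] = []
    current = ""
    for para in paragraphs:
        if current and len(current) + len(para) + 1 > max_chars:
            chunks.append(current)
            current = para
        else:
            current = f"{current}\n{para}" if current else para
    if current:
        chunks.append(current)
    return chunks


# Very rough "X is Y." detector, standing in for real concept identification.
DEFINITION = re.compile(r"^(?P<term>[A-Z][\w\s-]{1,40}?) is (?P<body>[^.]+)\.")


def definition_cards(chunk: str) -> List[Tuple[str, str]]:
    """Turn each detected definition into a ("What is X?", answer) pair."""
    cards = []
    for sentence in re.split(r"(?<=\.)\s+", chunk):
        match = DEFINITION.match(sentence)
        if match:
            cards.append((f"What is {match['term'].strip()}?", match["body"].strip()))
    return cards


notes = (
    "Osmosis is the movement of water across a membrane. It matters in cells.\n\n"
    "Mitosis is a type of cell division."
)
cards = [card for chunk in chunk_paragraphs(notes) for card in definition_cards(chunk)]
```

A pattern like this is exactly the "basic system" behavior described above: it finds explicit definitions but has no notion of context, which is where model-based concept identification earns its keep.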

From Images and Photos (OCR + Vision Models)

Image-based card generation combines two AI capabilities: OCR to extract text from photos (handwritten notes, whiteboard captures, textbook pages), and vision models to understand diagrams, charts, and visual content.

Modern OCR has advanced far beyond simple character recognition. Systems now handle:

- Handwriting recognition: Neural networks trained on millions of handwriting samples can read most handwritten notes with over 95% accuracy.

- Multi-language detection: Automatic identification and processing of text in multiple languages within the same image.

- Mathematical notation: Specialized models recognize formulas, equations, and chemical structures that standard OCR systems cannot parse.

- Diagram interpretation: Vision-language models can describe flowcharts, anatomical diagrams, and circuit diagrams, generating cards that test understanding of visual relationships.

The practical impact is significant. A medical student can photograph an anatomy textbook page and receive cards that test both the labeled structures and their functional relationships -- something that would take 30 minutes to create manually but happens in seconds with AI.

From Videos and Audio (ASR + Summarization)

Video and audio processing adds temporal complexity to the pipeline. A one-hour lecture contains far more information than can or should become flashcards, so the summarization stage is critical.

The processing flow works as follows:

- Speech-to-text conversion: ASR models transcribe spoken content. State-of-the-art systems achieve word error rates below 5% for clear speech and handle multiple speakers, technical vocabulary, and accented English.

- Speaker diarization: In lecture or interview settings, the system identifies who is speaking when, which helps distinguish questions from answers, main points from tangents.

- Topic segmentation: NLP models divide the transcript into topical segments, identifying when the speaker transitions from one concept to another.

- Key point extraction: Summarization models identify the most important and testable points within each segment, filtering out filler words, repetition, and tangential remarks.

- Card creation with timestamps: Generated cards can link back to the specific moment in the video where the concept was discussed, enabling learners to review the original explanation if needed.

This capability is particularly valuable for students who rely heavily on lecture recordings, as it transforms passive listening into active recall material.
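The topic segmentation and timestamp steps can be sketched as follows. This is a rough approximation under stated assumptions: the transcript is already split into timed segments, and word overlap between neighbouring segments stands in for a real segmentation model. `Segment`, `split_topics`, `timestamp_label`, and the 0.2 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Segment:
    start: float  # seconds from the start of the recording
    text: str


def _words(text: str) -> Set[str]:
    # Keep longer words only, as a crude filter for filler words.
    return {w.strip(".,?!").lower() for w in text.split() if len(w) > 3}


def split_topics(segments: List[Segment], threshold: float = 0.2) -> List[List[Segment]]:
    """Start a new topic when a segment shares few words with the current topic."""
    topics: List[List[Segment]] = []
    for seg in segments:
        if topics:
            prev = _words(" ".join(s.text for s in topics[-1]))
            cur = _words(seg.text)
            union = prev | cur
            overlap = len(prev & cur) / len(union) if union else 0.0
            if overlap >= threshold:
                topics[-1].append(seg)
                continue
        topics.append([seg])
    return topics


def timestamp_label(seconds: float) -> str:
    """Format a position in the recording as HH:MM:SS for a card's back-link."""
    minutes, secs = divmod(int(seconds), 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


lecture = [
    Segment(0, "Mitosis divides the nucleus into two nuclei."),
    Segment(42, "During mitosis the nucleus membrane dissolves."),
    Segment(3725, "Photosynthesis converts light into chemical energy."),
]
topics = split_topics(lecture)
links = [timestamp_label(topic[0].start) for topic in topics]
```

Each generated card would store the label of the topic it came from, so the learner can jump back to that moment in the recording.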

From Plain Text (Semantic Extraction)

Plain text input might seem simpler, but it actually demands the most sophisticated NLP. Without layout cues or visual context, the system must rely entirely on language understanding to identify what is worth learning.

Semantic extraction from text involves:

- Importance ranking: Not every sentence deserves a flashcard. ML models trained on educational content learn to distinguish core concepts from supporting details, examples, and transitions.

- Relationship mapping: The system builds a knowledge graph of concepts and their relationships, ensuring generated cards cover the full conceptual landscape rather than clustering around a single subtopic.

- Difficulty estimation: Based on concept complexity, prerequisite knowledge, and vocabulary level, the system estimates how challenging each card will be, which feeds into the spaced repetition scheduler.

- Gap detection: Advanced systems identify when the source material implies knowledge that is not explicitly stated and can generate prerequisite cards to fill those gaps.
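As a rough illustration of importance ranking, the sketch below scores each sentence by how often its content words recur across the whole text. This frequency heuristic is an assumption made for the example and is far simpler than the trained models described above; `rank_sentences`, `tokenize`, and the `STOPWORDS` set are hypothetical.

```python
import re
from collections import Counter
from typing import List, Tuple

STOPWORDS = {
    "the", "a", "an", "of", "and", "to", "in", "is", "are", "that",
    "it", "for", "as", "this", "by", "with", "on",
}


def tokenize(text: str) -> List[str]:
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def rank_sentences(text: str, top_k: int = 3) -> List[Tuple[float, str]]:
    """Score sentences by the average document-wide frequency of their words."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(tokenize(text))
    scored = []
    for sentence in sentences:
        tokens = tokenize(sentence)
        if tokens:
            score = sum(freq[t] for t in tokens) / len(tokens)
            scored.append((score, sentence))
    # Highest score first; ties keep their original order.
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]


passage = (
    "Enzymes are proteins that speed up reactions. "
    "Enzymes lower the activation energy of reactions. "
    "Anyway, lunch was nice."
)
top_two = rank_sentences(passage, top_k=2)
```

Sentences built from recurring terms float to the top, while the off-topic remark about lunch is left out of the deck.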

The Spaced Repetition Engine -- How Review Timing Is Optimized

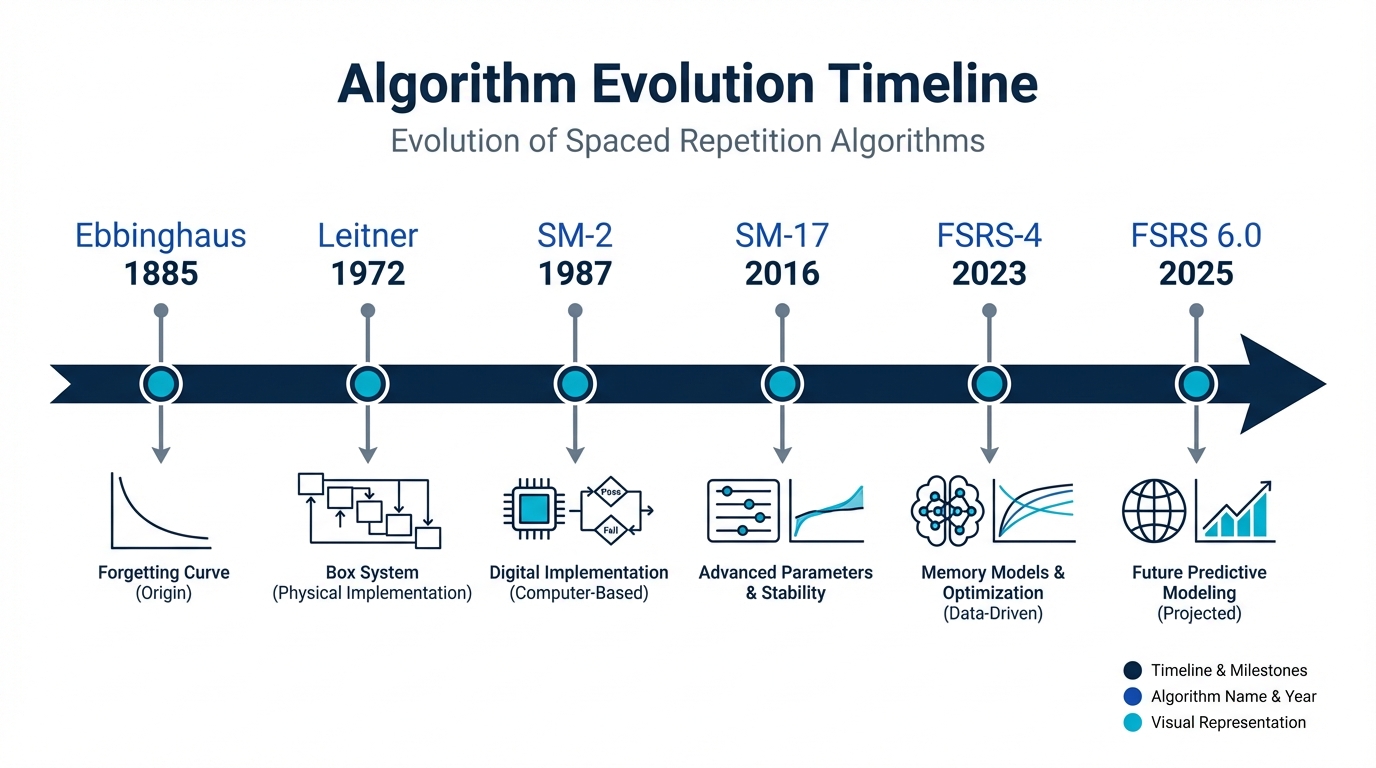

Card generation is only half the equation. The other half -- arguably the more important half -- is determining when each card should be reviewed. This is the domain of spaced repetition algorithms, and the field has evolved dramatically over the past 140 years.

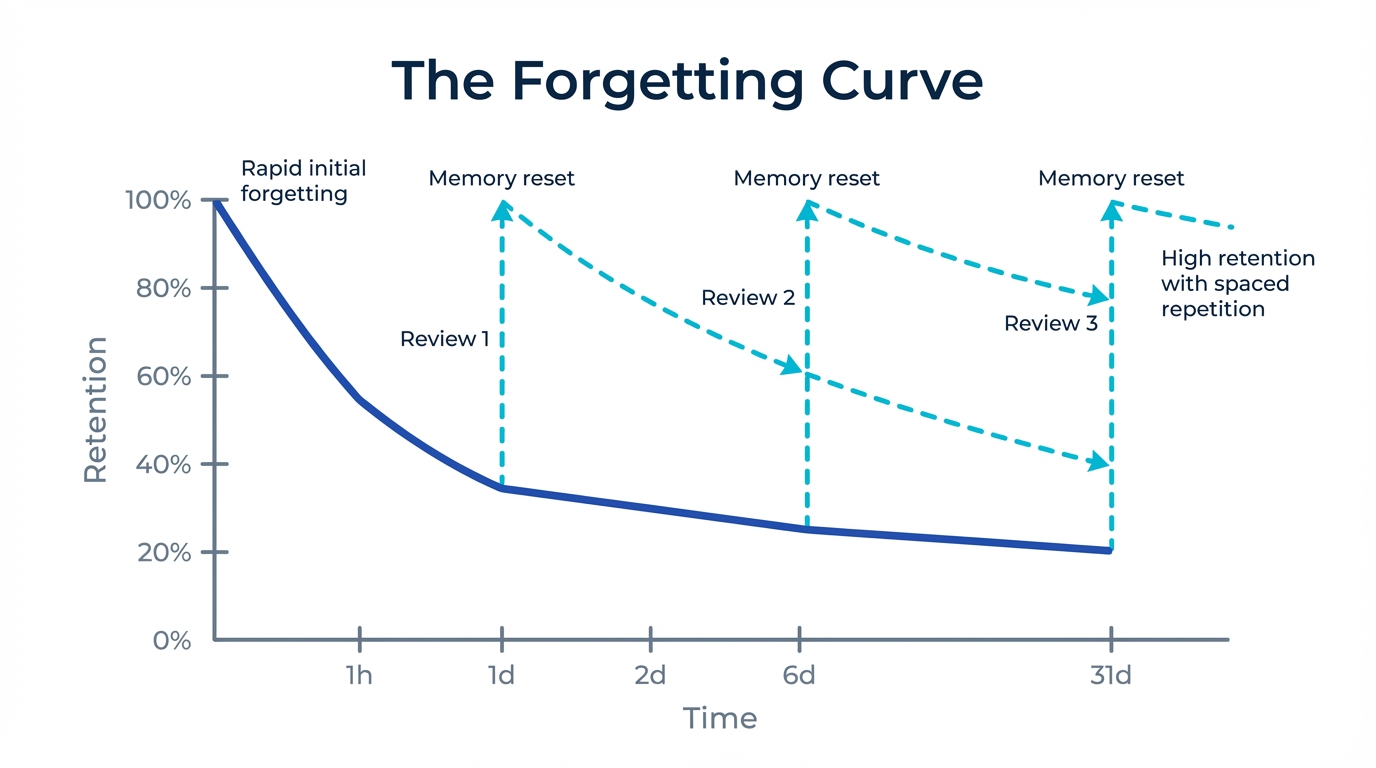

The Forgetting Curve (Ebbinghaus 1885)

The theoretical foundation for all spaced repetition systems traces back to Hermann Ebbinghaus, a German psychologist who in 1885 published his groundbreaking research on memory. By memorizing lists of nonsense syllables and testing his own recall at various intervals, Ebbinghaus quantified what we now call the "forgetting curve."

His key findings remain foundational:

- Memory retention drops exponentially after learning, with the steepest decline occurring in the first hour.

- After 24 hours, approximately 67% of learned material is forgotten without review.

- After one month, retention drops to roughly 21%.

- However, each successful review "resets" the curve at a shallower angle, meaning the interval before the next forgetting event grows progressively longer.

This final point is the critical insight that makes spaced repetition work: by timing reviews at the moment just before a memory would fade, each review strengthens the memory more than the last. Five or six well-timed reviews can transfer information from fragile short-term memory to durable long-term storage.
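The arithmetic behind that insight is easy to sketch. The code below assumes a simple exponential model of retention, R(t) = exp(-t / S), where S is a "stability" value in days, and it assumes that each successful review multiplies S by a fixed factor. Both the model and the `growth` value of 2.5 are illustrative assumptions, not figures from Ebbinghaus.

```python
import math
from typing import List


def retention(t_days: float, stability: float) -> float:
    """Fraction still remembered after t_days under the exponential model."""
    return math.exp(-t_days / stability)


def days_until(target: float, stability: float) -> float:
    """Days until retention decays to the target level (solve R(t) = target)."""
    return -stability * math.log(target)


def review_schedule(reviews: int, stability: float = 1.0,
                    growth: float = 2.5, target: float = 0.9) -> List[float]:
    """Gap before each review if every review multiplies stability by `growth`."""
    intervals = []
    for _ in range(reviews):
        intervals.append(days_until(target, stability))
        stability *= growth
    return intervals


gaps = review_schedule(reviews=6)
```

Because the stability grows with every review, each gap in `gaps` is 2.5 times longer than the one before it, which is why a handful of well-timed reviews can cover months of retention.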

SM-2: The Algorithm That Started It All (1987)

While Ebbinghaus identified the principle, it took over a century before someone built a practical algorithm around it. In 1987, Polish researcher Piotr Wozniak developed the SM-2 (SuperMemo 2) algorithm, which became the foundation for virtually every spaced repetition system that followed -- including Anki, the most widely used flashcard application in the world.

SM-2 works by assigning each card an "easiness factor" (EF) based on user self-reported difficulty ratings (typically a 0-5 scale). The algorithm then calculates the next review interval using a simple formula:

| Review Number | Interval Calculation |
|---|---|
| 1st review | 1 day |
| 2nd review | 6 days |
| 3rd+ review | Previous interval x EF |
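The whole procedure fits in a short function. The sketch below follows the commonly published description of SM-2: a starting EF of 2.5, a floor of 1.3, and a reset to a 1-day interval when the rating is below 3. The names `CardState` and `sm2_review` are ours, and implementations differ on details such as rounding and whether EF changes after a failed review, so treat this as one reasonable reading rather than a reference implementation.

```python
from dataclasses import dataclass


@dataclass
class CardState:
    repetitions: int = 0     # consecutive successful reviews
    interval_days: int = 0   # current gap between reviews
    easiness: float = 2.5    # EF, starts at 2.5 and never drops below 1.3


def sm2_review(state: CardState, quality: int) -> CardState:
    """Apply one review with a 0-5 quality rating and return the new state."""
    if not 0 <= quality <= 5:
        raise ValueError("quality must be between 0 and 5")

    if quality >= 3:
        if state.repetitions == 0:
            interval = 1
        elif state.repetitions == 1:
            interval = 6
        else:
            # Uses the EF from before this review's update.
            interval = round(state.interval_days * state.easiness)
        repetitions = state.repetitions + 1
    else:
        # Failed recall: restart the repetition sequence.
        repetitions = 0
        interval = 1

    # EF update from the published formula; harder ratings lower EF.
    delta = 0.1 - (5 - quality) * (0.08 + (5 - quality) * 0.02)
    easiness = max(1.3, state.easiness + delta)
    return CardState(repetitions, interval, easiness)


state = CardState()
history = []
for rating in (4, 4, 4, 1):
    state = sm2_review(state, rating)
    history.append(state.interval_days)
```

Three ratings of 4 leave EF unchanged and produce intervals of 1, 6, and 15 days; the final rating of 1 resets the interval to 1 day and lowers EF, so the card climbs back more slowly.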

SM-2 was revolutionary for its time and remains remarkably effective. However, it has significant limitations:

- Self-reported difficulty is subjective: Two users rating the same difficulty level may have very different actual retention probabilities.

- One-size-fits-all starting intervals: Everyone starts with the same 1-day and 6-day intervals regardless of material difficulty or learner ability.

- No cross-card learning: The algorithm treats each card independently, missing patterns like "this user struggles with all cards related to organic chemistry."

- No adaptation to learning context: Time of day, study session length, and other contextual factors are ignored.

These limitations are the starting point for the next generation of algorithms, and for any deeper comparison of how AI-enhanced systems improve upon traditional Anki-style approaches.

FSRS 6.0: The Machine Learning Revolution (2025)

The Free Spaced Repetition Scheduler (FSRS) represents the most significant advancement in spaced repetition algorithms since SM-2. Developed by Jarrett Ye and now in its sixth major version, FSRS 6.0 replaces SM-2's simple formula with a machine learning model trained on hundreds of millions of review records.

FSRS 6.0 introduces several transformative improvements:

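- Predictive recall probability: Instead of mapping each self-reported rating directly onto an interval multiplier, FSRS estimates the probability that you will recall a card at any given moment, based on your complete review history. You still grade each review, but the grade updates a memory model rather than setting the next interval on its own.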

- Personalized parameters: The algorithm learns 21 individual parameters from your review data, adapting to your specific memory characteristics. Some people have naturally stronger initial memory formation; others retain information longer after the first review. FSRS captures these differences.

- Same-day review handling: Unlike SM-2, which was designed for once-daily reviews, FSRS models intra-day memory dynamics, crucial for users who study multiple sessions per day.

- Reduced review burden: Benchmark studies show FSRS schedules 20-40% fewer reviews than SM-2 while maintaining the same target retention rate, because its predictions are more accurate.
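The "predictive recall probability" idea can be illustrated with the power-law forgetting curve that the FSRS project has published for its earlier versions, where the curve is scaled so that recall probability is exactly 90% when the elapsed time equals the card's stability. The sketch below is an assumption-laden simplification: the function names are ours, the `decay` default of 0.5 is the fixed value from those earlier versions (as we understand it, FSRS 6.0 learns this value per user), and the real scheduler also updates stability and difficulty after every review, which is not shown here.

```python
def fsrs_retrievability(elapsed_days: float, stability: float, decay: float = 0.5) -> float:
    """Estimated recall probability after elapsed_days for a given stability."""
    # The factor is chosen so that retrievability is 0.9 when elapsed == stability.
    factor = 0.9 ** (-1.0 / decay) - 1.0
    return (1.0 + factor * elapsed_days / stability) ** (-decay)


def fsrs_interval(stability: float, desired_retention: float = 0.9, decay: float = 0.5) -> float:
    """Days to wait so that recall probability falls to desired_retention."""
    factor = 0.9 ** (-1.0 / decay) - 1.0
    return stability / factor * (desired_retention ** (-1.0 / decay) - 1.0)


stability_days = 10.0
p_now = fsrs_retrievability(elapsed_days=10.0, stability=stability_days)
wait_for_80 = fsrs_interval(stability_days, desired_retention=0.8)
```

Lowering the desired retention from 90% to 80% lengthens the interval considerably, which is the lever behind the reduced review burden: the learner chooses the retention target and the model works out the longest interval that still meets it.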

Memly was among the first applications to implement FSRS 6.0 as its core scheduling engine. For a detailed technical breakdown of the algorithm and its real-world performance, see our article on the FSRS 6.0 algorithm implementation.

Personalization: How AI Adapts to Your Learning Patterns

Beyond the spaced repetition algorithm itself, modern AI flashcard apps build comprehensive learner models that personalize the entire experience. This goes far beyond simply adjusting review intervals.

The personalization engine typically tracks and adapts to:

- Circadian learning patterns: Research shows memory consolidation varies by time of day. AI systems detect when you perform best and can suggest optimal study windows. If your accuracy consistently drops after 10 PM, the app knows to schedule demanding new material for morning sessions.

- Session length optimization: Some learners maintain focus for 45-minute sessions; others fade after 15 minutes. The AI monitors accuracy decay within sessions and recommends break points before performance degrades.

- Difficulty calibration: The system learns which types of content challenge you most. If you consistently struggle with date-based facts but breeze through conceptual questions, the algorithm adjusts difficulty estimates for each content type independently.

- Learning velocity tracking: How quickly you acquire new cards versus how well you retain old ones determines the optimal balance of new versus review material in each session.

- Interference detection: AI can identify when similar cards interfere with each other (a well-documented phenomenon in memory research) and adjust scheduling to space out confusable items.

The result is a learning experience that feels increasingly intuitive over time. After a few weeks of consistent use, the app understands your memory characteristics better than you do yourself -- and schedules reviews accordingly.
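Session length optimization is one of the easier signals to sketch. The example below compares a rolling accuracy window against the accuracy at the start of the session and reports where performance first dips. The function name, the window size of 10, and the 15-point drop threshold are hypothetical choices for illustration, not values taken from any specific app.

```python
from typing import List, Optional


def suggest_break(outcomes: List[bool], window: int = 10, drop: float = 0.15) -> Optional[int]:
    """Return the index of the first review in the window where accuracy dipped.

    outcomes holds one entry per review in session order (True = recalled).
    Returns None if the session is too short or accuracy never drops enough.
    """
    if len(outcomes) < 2 * window:
        return None
    baseline = sum(outcomes[:window]) / window
    for end in range(2 * window, len(outcomes) + 1):
        recent = sum(outcomes[end - window:end]) / window
        if baseline - recent >= drop:
            return end - window
    return None


# 20 correct answers, then alternating misses as focus fades.
session = [True] * 20 + [False, True] * 5
break_at = suggest_break(session)
```

Averaged over many sessions, a value like `break_at` gives the app a per-learner estimate of how many cards fit into a session before accuracy starts to decay.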

The Quality Question: How Accurate Are AI-Generated Cards?

AI-generated flashcards have improved dramatically, but they are not perfect. Understanding where AI excels and where it falls short is important for using these tools effectively.

Where AI excels:

- Factual extraction: AI is highly reliable at identifying and creating cards for explicit facts -- definitions, dates, formulas, vocabulary, and named processes. Accuracy rates for factual extraction from well-structured source material exceed 95% in modern systems.

- Comprehensive coverage: AI does not get tired or bored. It will create cards for every testable concept in a 200-page textbook, catching details a human might skip over during manual card creation.

- Consistent formatting: AI-generated cards follow uniform patterns, making them easier to review than hand-made cards that vary in style and specificity.

- Speed: What takes hours manually takes seconds with AI. A 50-page chapter can yield 150-300 high-quality cards in under a minute.

Where AI still struggles:

- Nuanced understanding: AI may miss subtle implications, cultural context, or discipline-specific conventions that an expert would catch.

- Prioritization: While AI can rank importance, it does not always know what will be on the exam. A human who has taken the course before might prioritize differently.

- Creative connections: The best flashcards often draw unexpected connections between concepts. AI tends to create cards within the scope of the source material rather than linking to outside knowledge.

- Hallucination risk: Large language models can occasionally generate plausible-sounding but factually incorrect content. Quality systems include verification steps, but users should review generated cards for accuracy.

The practical recommendation is to use AI generation as a starting point, then spend 5-10 minutes reviewing and editing the generated deck. This hybrid approach -- AI for speed, human for quality control -- typically produces results superior to either purely manual or purely automated creation.
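Part of that review can be automated. The sketch below flags cards whose answers contain words that never appear in the source text, which is a cheap first filter for hallucinated content. It is a crude word-overlap check under our own assumptions (the function names and the 0.8 threshold are hypothetical), and it will also flag legitimate paraphrases, so it narrows down what a human should look at rather than replacing the human.

```python
import re
from typing import List, Set, Tuple


def _tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounding_score(answer: str, source: str) -> float:
    """Share of the answer's distinct words that also occur in the source."""
    answer_tokens = _tokens(answer)
    if not answer_tokens:
        return 0.0
    return len(answer_tokens & _tokens(source)) / len(answer_tokens)


def flag_for_review(cards: List[Tuple[str, str]], source: str,
                    min_score: float = 0.8) -> List[Tuple[str, str]]:
    """Return the (question, answer) pairs whose answers look ungrounded."""
    return [(q, a) for q, a in cards if grounding_score(a, source) < min_score]


source_text = "The mitochondrion produces ATP through oxidative phosphorylation."
generated = [
    ("What does the mitochondrion produce?", "ATP through oxidative phosphorylation"),
    ("Who discovered mitochondria?", "Albert Einstein in 1905"),
]
suspect = flag_for_review(generated, source_text)
```

Here only the second card is flagged, because none of its answer appears in the source, so the 5-10 minutes of manual review can start with the cards most likely to be wrong.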

FAQ

How does an AI flashcard app differ from a regular flashcard app?

A regular flashcard app is essentially a digital version of paper flashcards -- you create cards manually and review them in a fixed or random order. An AI flashcard app adds three critical capabilities: automatic card generation from source material (PDFs, images, audio), intelligent scheduling based on spaced repetition algorithms that adapt to your individual memory patterns, and continuous personalization that optimizes every aspect of the learning experience over time. The difference in learning efficiency is substantial, with studies showing 2-3x better retention rates compared to unscheduled review.

Can AI flashcard apps work with any subject?

Yes, but effectiveness varies by subject type. AI flashcard apps excel with fact-heavy subjects like medicine, law, language learning, history, and certification exams. They are also highly effective for STEM subjects where formulas, definitions, and processes need to be memorized. Subjects that require more creative or analytical thinking (essay writing, philosophical argumentation) benefit less from flashcard-based study but can still use them for foundational knowledge. For a comprehensive overview of how different student populations benefit, see our guide on the best AI flashcard apps for students.

How accurate are AI-generated flashcards?

Modern AI systems achieve over 95% accuracy for factual extraction from well-structured source material. However, accuracy can drop with handwritten notes, poorly formatted documents, or highly specialized technical content. The best practice is to review AI-generated cards before studying them, which typically takes only a few minutes for a deck of 50-100 cards. Most quality apps also allow easy editing and flagging of incorrect cards.

What is FSRS and why does it matter?

FSRS (Free Spaced Repetition Scheduler) is a next-generation spaced repetition algorithm that uses machine learning to estimate how likely you are to recall each card at any given time. Unlike the older SM-2 algorithm used by most flashcard apps, FSRS learns your individual memory patterns from your review history and adjusts scheduling with much greater precision. The result is 20-40% fewer reviews needed to maintain the same retention rate, saving significant study time. You can learn more in our detailed article about how the FSRS 6.0 algorithm works.

How long does it take for the AI to learn my study patterns?

Most AI flashcard apps begin personalizing from your very first session, using population-level defaults as a starting point. After approximately 100-200 reviews (typically 3-5 study sessions), the algorithm has enough data to make meaningful individual adjustments. After 500+ reviews, the personalization becomes highly accurate, with the system understanding your memory characteristics, optimal study times, and subject-specific strengths and weaknesses. The more consistently you use the app, the better it becomes at scheduling your reviews.

Can I trust AI to create my entire study deck, or should I still make some cards manually?

The research-backed answer is to use a hybrid approach. AI is excellent for rapidly generating comprehensive coverage of source material -- it catches details you might miss and saves hours of manual card creation. However, the act of creating cards manually has its own learning benefit (the "generation effect" in cognitive science). The optimal strategy is to let AI generate the bulk of your deck from source material, then manually add cards for concepts you find personally confusing, connections between topics, and exam-specific material that the AI might not prioritize correctly.

Conclusion: The Future of Learning Is Adaptive

AI flashcard apps represent a convergence of multiple AI disciplines -- NLP, computer vision, speech recognition, and machine learning -- all focused on a single goal: making human memory more efficient. The technology stack behind these apps is genuinely sophisticated, but the end result is deceptively simple: you study less, remember more, and spend your time on the material that actually needs attention.

The gap between AI-powered and traditional flashcard methods will only widen as algorithms improve and personalization becomes more granular. If you are still creating and scheduling flashcards manually, you are working significantly harder than you need to.

Ready to experience the difference? Explore our complete guide to AI flashcard apps to find the right tool for your learning goals, or download Memly to see FSRS 6.0 and AI card generation in action.